Dua Lipa and Callum Turner have something phenomenal, don’t you agree? Case in point: the “Physical” singer and Masters of

LATEST NEWS

TECHNOLOGY

Overwhelmed? 6 ways to stop small stresses at work from becoming big problems

Modern professionals have busy workloads, and juggling all these demands is tough, especially when unexpected challenges appear on

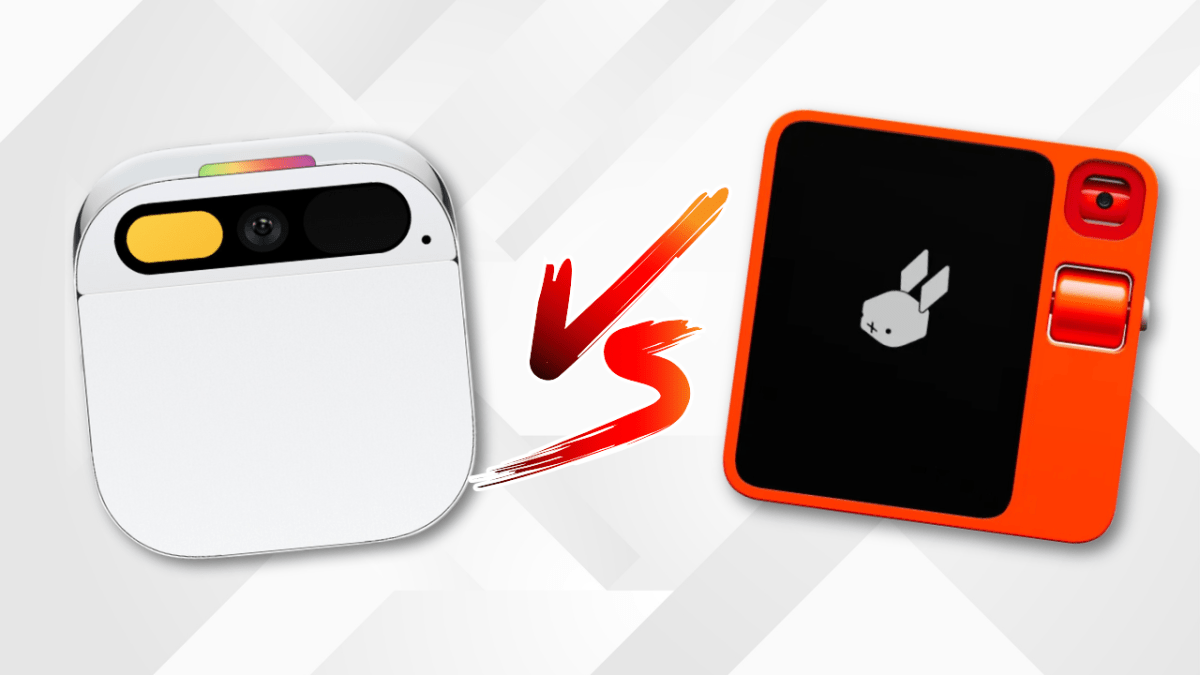

Watch: Between Rabbit’s R1 vs Humane’s Ai Pin, which had the best launch?

After a successful unveiling at CES, Rabbit is letting journalists try out the R1 — a small orange gadget with

A Costco membership is just $20 with this deal

A new Costco membership comes with a free $40 gift card right now. Thinking about buying a Costco membership?

Thoma Bravo to take UK cybersecurity company Darktrace private in $5B deal

Darktrace is set to go private in a deal that values the U.K.-based cybersecurity giant at around $5 billion. A

What is Matter? How the connectivity standard can change your smart home

Matter has received much attention in the Internet of Things (IoT) arena since its announcement in late 2019. The CSA, the

World

Australian students join protests for Palestine

Students from universities in Australia are joining their American peers in protests for Palestine. Like demonstrators on campuses in