Morgan Wallen is taking accountability for his arrest. Nearly two weeks after he was booked on reckless endangerment and disorderly

LATEST NEWS

TECHNOLOGY

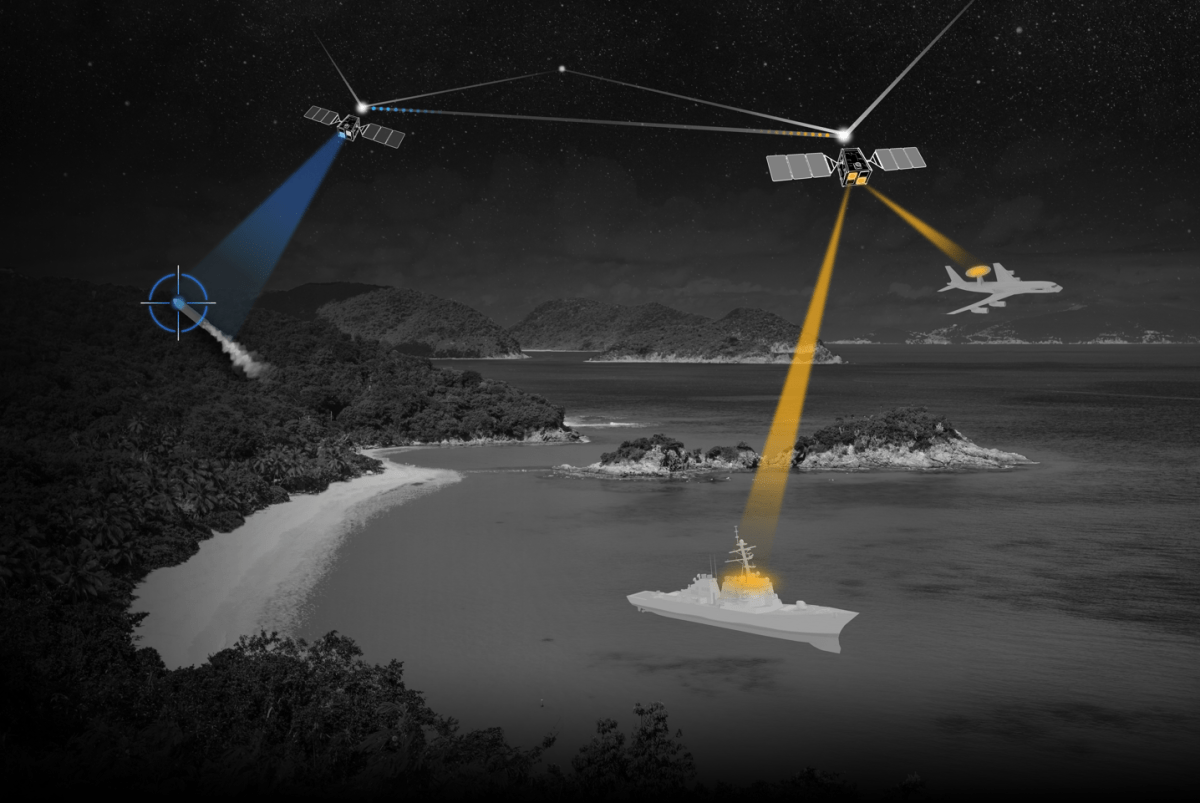

CesiumAstro claims former exec spilled trade secrets to upstart competitor AnySignal

CesiumAstro alleges in a newly filed lawsuit that a former executive disclosed trade secrets and confidential information about sensitive tech,

Your Android phone could have stalkerware — here’s how to remove it

Consumer-grade spyware apps that covertly and continually monitor your private messages, photos, phone calls and real-time location are a growing

10 iPhone settings I changed to dramatically improve battery life

Max Buondonno/ZDNET No matter how much you use your iPhone, you’ve almost certainly thought about how to maximize its battery

The best wireless video doorbell for Ring fans is 20% off right now

Maria Diaz/ZDNET What’s the deal? The Ring Battery Doorbell Plus is discounted to $120 as part of a limited-time Amazon

Buy Windows 11 Pro for just $40 right now

Need an operating system upgrade? Windows 11 Pro puts

WORLD

North Korea conducts test on new ‘super-large warhead’: State media | Weapons News

Pyongyang says new warhead designed for cruise missiles, adding that a new anti-aircraft rocket was also tested. North Korea has